The Strategic Intelligence Gap

Consider this scenario: a major automotive manufacturer discovered that a competitor had been actively developing open-source battery management software for eighteen months — information publicly available on GitHub but invisible to their market intelligence team. By the time product teams received competitive analysis reports, the rival had already secured partnerships with three major suppliers. The problem wasn't a failure of intelligence gathering. It was a failure of search architecture.

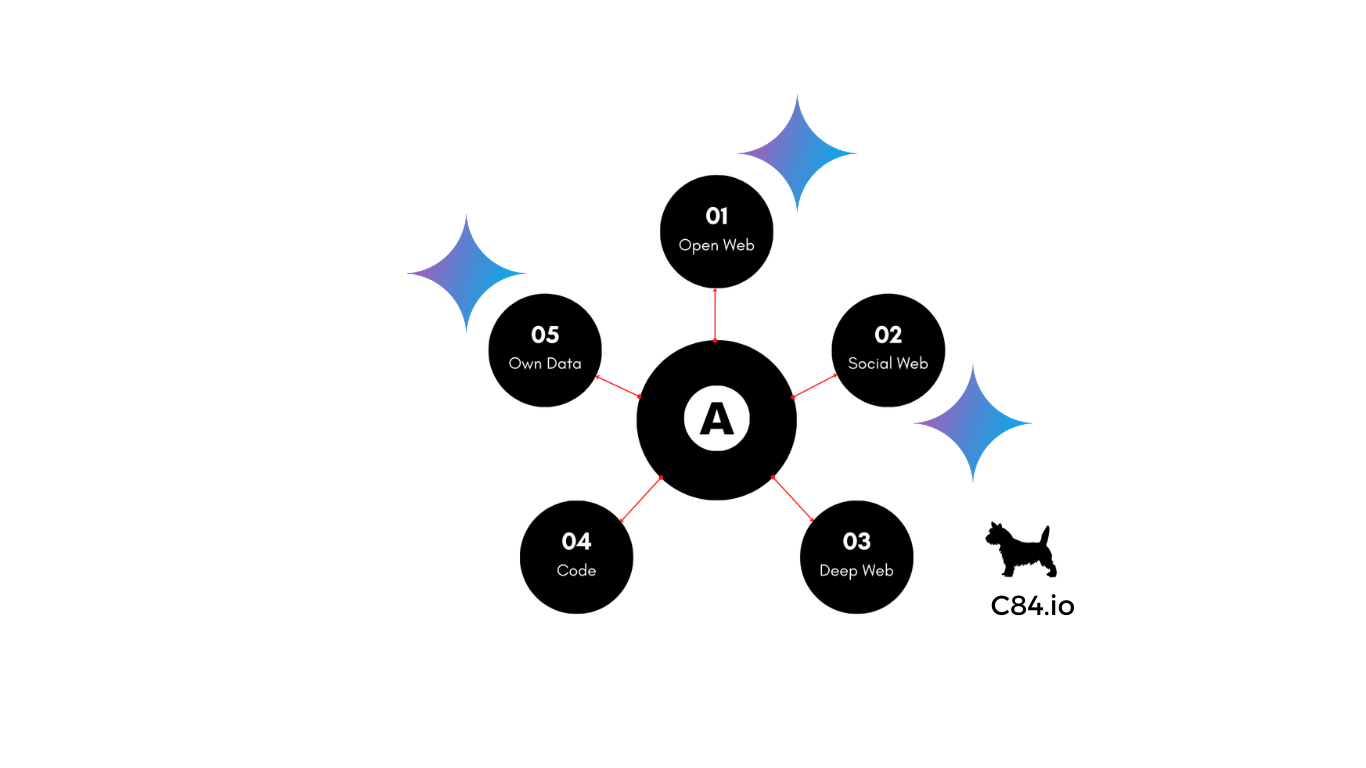

Organizations today operate under a dangerous assumption: that access to information equals intelligence. In reality, the modern data landscape has fragmented into five distinct search ecosystems, each requiring specialized infrastructure, access protocols, and domain expertise. Companies that haven't architected for this fragmentation are systematically blind to threats, opportunities, and market movements happening in plain sight — just in different databases.

This isn't a technology problem. It's a strategic architecture problem with competitive consequences.

Why Traditional Intelligence Frameworks Fail

The dominant model for competitive and market intelligence was designed for a simpler information environment. Teams monitor news feeds, commission market research reports, attend industry conferences, and track competitor announcements. This approach assumes that strategically relevant information flows through established, centralized channels.

That assumption is obsolete.

Consider how strategically significant information now distributes across fundamentally different search infrastructures:

Open Web search engines provide universal access to indexed content, but their scope is limited to what crawlers can reach. Paywalled research, dynamically generated content, and intentionally obscured information remain invisible. The 100% user literacy that makes Google ubiquitous also creates a dangerous over-reliance on a single intelligence layer.

Social platforms function as unrecognized search engines with proprietary indices of user behavior, sentiment, and emerging trends. Instagram's Explore, LinkedIn's talent search, and X's advanced operators all maintain sophisticated databases that reveal customer preferences, talent movements, and market sentiment in real-time. Yet most organizations treat social media as marketing channels rather than intelligence infrastructure.

Deep Web databases contain the richest domain-specific intelligence: academic research, legal filings, industry-specific platforms, regulatory databases, and specialized forums. Each maintains independent indices with unique metadata schemas and access requirements.

Code repositories expose technological development before it manifests in products. GitHub, GitLab, and specialized platforms index programming patterns, dependency graphs, and development velocity. When competitors or open-source communities commit code, file issues, or fork projects, they're broadcasting strategic intentions.

First-party data systems — CRM platforms, ERP systems, support databases, sales analytics — contain the most privileged information about customer behavior and operational performance. But isolated from external context, internal data reveals trends without explaining causes.

The Compounding Cost of Fragmentation

This architectural fragmentation creates three categories of strategic failure:

Latency gaps: Information exists and is accessible, but organizational processes can't retrieve it quickly enough. By the time fragmented intelligence reaches decision-makers, competitors have already acted on the same signals.

Visibility gaps: Information exists but lives in ecosystems where the organization has no search capability. Regulatory changes discussed in specialized forums, technical developments on GitHub, sentiment shifts on social platforms — all invisible to teams querying only traditional sources.

Synthesis gaps: Information fragments exist across multiple systems, but no infrastructure connects them into coherent intelligence. A support ticket spike makes sense only when correlated with social sentiment, code repository activity, and regulatory discussions. Isolated, each data point is noise.

The Multi-Source Intelligence Architecture

Leading organizations are responding not by hiring more analysts but by architecting unified search infrastructure across fragmented ecosystems. This requires three fundamental capabilities:

Federated search orchestration: AI systems that understand which databases contain relevant information and how to query them effectively. This isn't search aggregation — it's intelligent routing that knows whether a question requires Open Web indices, social platform APIs, deep web databases, code repositories, or internal systems.

Cross-system authentication and access: Technical infrastructure that maintains credentials across platforms while respecting access controls, rate limits, and compliance requirements.

Pattern synthesis across heterogeneous data: Analytical frameworks that identify relationships between regulatory changes, technical developments, market sentiment, competitor positioning, and internal performance metrics. This is where AI moves from retrieval to intelligence generation.

From Access to Advantage

The competitive moat isn't access to any single data source — it's the infrastructure to synthesize across all of them systematically. A company monitoring only Open Web sources learns about regulatory changes when they're announced. A company with Deep Web search capability sees those changes discussed in regulatory working groups months earlier. A company with multi-source architecture sees the regulatory discussion, identifies which competitors are already modifying code repositories in response, observes social sentiment shifting, and correlates this with changing customer support patterns — all before competitors using traditional intelligence methods even know the regulation exists.

The strategic question isn't whether organizations can access these five layers. Most can, given sufficient time and resources. The question is whether they can query them simultaneously, continuously, and synthesize results faster than competitors.

Implementation Realities

Building multi-source intelligence architecture requires confronting practical challenges that vendor marketing glosses over. Authentication complexity is the first barrier — maintaining active credentials across dozens of platforms while respecting rate limits, ToS constraints, and geographic access restrictions demands ongoing operational attention. Most organizations underestimate this by an order of magnitude.

Data normalization is the second challenge. Each search ecosystem returns results in proprietary formats with different metadata schemas, confidence signals, and temporal markers. Synthesizing signals across these formats requires substantial data engineering investment before any analytical value is extractable.

The third challenge is relevance calibration. Multi-source architectures generate massive signal volume; without sophisticated filtering, teams drown in noise while missing the strategic signals they were built to surface. Relevance models must be domain-specific, continuously updated, and tuned against actual decision-making needs — not generic information retrieval benchmarks.

Organizations that succeed typically start with a specific, high-value use case — competitor technical monitoring, regulatory change detection, or talent movement tracking — build capability in that domain, and expand systematically. Attempting to build comprehensive multi-source intelligence in parallel across all domains simultaneously is the most common failure pattern.

The Question Ahead

The fragmentation of search architecture isn't a temporary condition waiting to be resolved by a dominant platform. It reflects the underlying structure of how different types of information are produced, governed, and accessed — and that structure is becoming more differentiated, not less. Regulatory pressure on data portability, privacy legislation, and platform competition will accelerate ecosystem fragmentation over the next decade.

Organizations that invest now in multi-source search infrastructure are building a capability that becomes more valuable as fragmentation increases. Those that defer the investment are not preserving optionality — they are falling further behind competitors who have already started the architectural work.

The competitive intelligence question of the next decade isn't "What information can we access?" It's "How quickly can we synthesize across the ecosystems where the most important information lives?" That synthesis capability — not any single data source — is the durable strategic advantage.